
What are containers and why are they different from VMs

A container is a runtime environment that isolates an application together with all its dependencies, such as libraries, configuration files, and other binaries. These are bundled into a single package known as an image.

When the image of an application is created, differences between O.S. (Operating System) distributions and other layers of the infrastructure are abstracted away. This solves one of the biggest problems in running software: how to make an application work reliably in different environments.

What problems can Containers solve?

Delivery time: Containers can be created and deleted within seconds, so they can be instantiated “just-in-time”. There is no need to boot a whole O.S. for each new container.

Portability: Containers isolate the services of an application. This makes it possible to move your app freely between environments, even when the server runs a different O.S. distribution, as long as the host provides a compatible kernel and container runtime.

Configuration: Changes can be made to each container individually and automatically, without rebuilding the entire application. Because containers are lightweight, they can be instantiated when needed and are available almost immediately.

Differences between Containers and Virtual Machines

There are many differences between Containers and VMs. Here are the most important:

Operating System

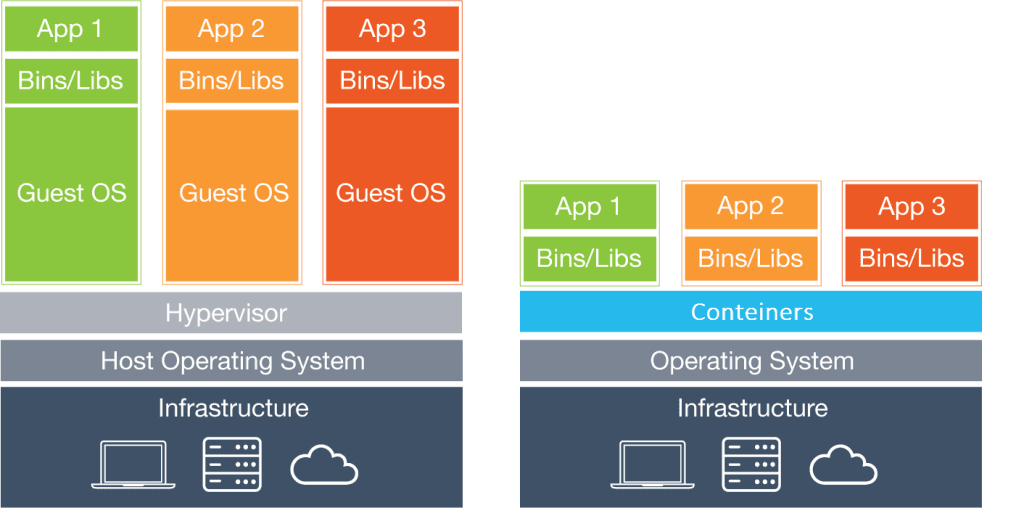

Containers and virtual machines differ architecturally in how they use the Operating System. Containers are hosted on a server with a single O.S. (the host O.S.), whose kernel is shared among them.

Virtual machines, on the other hand, have the host O.S. of the physical server they run on, plus a guest O.S. in each VM. The guest O.S. is independent of the host O.S. and may differ from one VM to another.

In practical terms, containers are most commonly used when your applications can all run on the same kernel. However, if you have applications or services that need a different Operating System or kernel (for example, Windows and Linux workloads on the same server), VMs are usually required.

Sharing the host’s O.S. makes containers very lightweight, which reduces boot time. Because of this, the overhead (the amount of physical resources required on the server) of managing a container system is much smaller than with VMs.
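One way to observe the shared kernel is to print the kernel release seen by a process, once on the host and once inside a container. The snippet below is a minimal sketch that uses only the Python standard library; `kernel_info` is a name chosen for this illustration. On a Linux host, both runs would be expected to report the same release, whereas a VM would report the kernel of its guest O.S.

```python
import platform


def kernel_info() -> dict:
    """Return the kernel name and release visible to this process."""
    uname = platform.uname()
    return {"system": uname.system, "release": uname.release}


if __name__ == "__main__":
    info = kernel_info()
    print(f"{info['system']} kernel release: {info['release']}")
```

Note that on macOS and Windows, tools such as Docker Desktop run Linux containers inside a lightweight VM, so the release printed inside a container there is that VM's kernel rather than the kernel of the desktop O.S.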

Security

Because the host kernel is shared between containers, containerized processes have access to the kernel’s subsystems. As a result, a vulnerability in an application can, in the worst case, compromise the entire host server. For this reason, giving root access to applications is not recommended.

On the other hand, VMs are unique instances with their own kernel and security settings. They can, therefore, run applications that need higher permissions.
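The recommendation against root access for containerized applications can be enforced from within the application itself. The following is a minimal sketch, assuming a Unix-like container; `running_as_root` and `main` are hypothetical names used only for this example, and refusing to start is just one possible policy.

```python
import os
import sys
from typing import Optional


def running_as_root(euid: Optional[int] = None) -> bool:
    """Return True when the effective user ID is 0 (root)."""
    if euid is None:
        euid = os.geteuid()  # available on Unix-like systems only
    return euid == 0


def main() -> int:
    if running_as_root():
        # Assumed policy for this sketch: refuse to start with root privileges.
        print("Refusing to start as root; use an unprivileged user.", file=sys.stderr)
        return 1
    print("Running as an unprivileged user.")
    return 0


if __name__ == "__main__":
    sys.exit(main())
```

The optional `euid` parameter exists only so the check can be exercised without actually switching users.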

Portability

Each container image is a standalone package that runs an application or part of one. Because a separate guest O.S. is not required, the image can be moved between different platforms.

Thanks to their lightweight architecture, containers can be started or stopped in a matter of seconds, much faster than VMs. This makes it easier and faster to deploy containers to servers.

VMs, on the other hand, are isolated instances running their own Operating System. They cannot be moved between platforms without a careful migration process.

When applications or services must be developed and tested in different environments, containers are usually the better option.

Performance

Containers are significantly lighter than VMs, thus requiring fewer resources.

As a result, containers boot much faster, since a virtual machine needs to load an entire Operating System before it is ready.

Another major difference is that a container's use of resources such as CPU, memory, and I/O varies with its load or traffic. Unlike with VMs, it is not necessary to allocate resources to a container permanently.

Because of this, containers can be said to scale much more easily.
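The difference between permanent and on-demand allocation can be illustrated with some simple arithmetic. The sketch below is a deliberately simplified model, not a measurement: the function names and all numbers are hypothetical, and it assumes each VM holds a fixed memory reservation while containers consume only what their current load requires.

```python
from typing import Sequence


def vm_reserved_mb(vm_count: int, reserved_per_vm_mb: int) -> int:
    """Memory set aside when every VM holds a fixed reservation."""
    return vm_count * reserved_per_vm_mb


def container_used_mb(current_usage_mb: Sequence[int]) -> int:
    """Memory in use when each container takes only what it currently needs."""
    return sum(current_usage_mb)


# Hypothetical figures, for illustration only.
usage = [120, 300, 80, 512]                   # current demand of four services, in MB
vm_total = vm_reserved_mb(len(usage), 1024)   # one VM per service, 1 GB reserved each
container_total = container_used_mb(usage)

print(f"VMs reserve {vm_total} MB; containers use {container_total} MB")
# prints "VMs reserve 4096 MB; containers use 1012 MB"
```

In this model, the memory the containers leave unused stays available on the host for additional containers, which is the intuition behind the scalability claim.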

Conclusion

Containers are often described as an evolution of VMs and are being adopted by companies of all sizes.

Their flexibility and lower resource requirements make them an attractive choice when it comes to deploying and managing your applications.

Despite being a less mature technology than conventional virtualization, containers have developed rapidly and are already the standard choice for the workloads of large companies such as Google and Walmart.