Building a private cloud on bare metal gives you both flexibility and control. It provides cloud-like agility with the dedicated performance of physical servers. This keeps you in charge of your infrastructure, avoiding the limitations of public cloud providers. In this guide, we’ll walk you through setting up your own private cloud on the Latitude.sh platform using Harvester, an open-source hyper-converged infrastructure (HCI) solution. Harvester simplifies managing virtual machines and Kubernetes clusters on your servers, giving you a unified system that supports cloud-native workloads from the core to the edge. So let’s jump right in!

Requirements

- 1 Latitude.sh server (3 recommended for high availability, but not required)

- Harvester iPXE script for cluster setup

- IP address range to assign to your VMs (at least one /29 block shared by the cluster and the VMs, or two /29 blocks to separate cluster and VM traffic)

Step 1: Prepare the iPXE script

Get the iPXE script

Go to the Latitude.sh repository, or copy the script below to automatically install Harvester on your server:

Configure the Harvester config file

For the first node, configure the HARVESTER-CONFIG-FILE.yaml shown below, replacing the placeholders with your environment details. Set each placeholder as follows:

- CLUSTER-TOKEN: This is a unique token used to create the cluster and join new nodes. You can generate a random string (e.g., openssl rand -hex 16) or create a meaningful token.

- HOSTNAME: Replace this with the hostname of your server. This can be anything meaningful to you, like node1, harvester-master, etc.

- SSH_PUBLIC_KEY: Enter your public SSH key here. This allows you to log in to the server via SSH after installation. You can find your public key by running cat ~/.ssh/id_rsa.pub on your local machine.

- ENCRYPTED_PASSWORD: Replace this with an encrypted password (hashed with bcrypt, for example). You can generate this using a password generator or encryption tool like openssl.

- INTERFACE_NAME: Set this to your network interface name (e.g., eth0, enp1s0, etc.). You can find this by running ip a or ifconfig on your server to identify the network interface name.

- PUBLIC_IP: This should be the static public IP address assigned to the server. It’s the IP you want to use for the management interface.

- PUBLIC_SUBNET: Replace this with the subnet mask of the network your server is in (for example, 255.255.255.248 for a /29 block).

- PUBLIC_GW: This is the gateway IP address for your network, typically the IP address of your router.

- BLOCK-STORAGE-DEVICE: Specify the storage device where Harvester should be installed. This is typically something like /dev/sda or /dev/nvme0n1, depending on your server’s disk layout.

- VIRTUAL-IP-FOR-CLUSTER-MANAGEMENT: This is the virtual IP (VIP) that will be used for cluster management. It must be an unused IP in the same subnet as your public IP and dedicated to cluster management.
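
Before pasting values into the config file, it can help to sanity-check them. The Python sketch below generates a token comparable to openssl rand -hex 16 and checks that the gateway and the VIP fall inside the subnet defined by PUBLIC_IP and PUBLIC_SUBNET. The function names and the sample addresses (taken from the 203.0.113.0/24 documentation range) are illustrative assumptions; they are not part of Harvester or the Latitude.sh platform.

```python
import ipaddress
import secrets
from typing import List


def generate_cluster_token(num_bytes: int = 16) -> str:
    """Return a random hex string (32 characters for 16 bytes)."""
    return secrets.token_hex(num_bytes)


def check_network_values(public_ip: str, subnet_mask: str,
                         gateway: str, vip: str) -> List[str]:
    """Return a list of problems; an empty list means the values look consistent."""
    # strict=False lets us pass a host address together with its mask.
    network = ipaddress.ip_network(f"{public_ip}/{subnet_mask}", strict=False)
    node = ipaddress.ip_address(public_ip)
    gw_addr = ipaddress.ip_address(gateway)
    vip_addr = ipaddress.ip_address(vip)

    problems = []
    for label, addr in (("PUBLIC_GW", gw_addr),
                        ("VIRTUAL-IP-FOR-CLUSTER-MANAGEMENT", vip_addr)):
        if addr not in network:
            problems.append(f"{label} {addr} is outside {network}")
    # The VIP must be an unused address, so it cannot match the node or gateway.
    if vip_addr == node:
        problems.append("VIP is the same as PUBLIC_IP")
    if vip_addr == gw_addr:
        problems.append("VIP is the same as PUBLIC_GW")
    return problems


if __name__ == "__main__":
    # Sample values only; substitute the addresses assigned to your server.
    print(generate_cluster_token())
    print(check_network_values("203.0.113.10", "255.255.255.248",
                               "203.0.113.9", "203.0.113.11"))
```

The check only confirms that the addresses are consistent with each other. It cannot tell whether the VIP is actually unused on the network, so that still needs to be verified against your IP assignments.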

Step 2: Request additional IPs

Calculate how many IP addresses you need based on the number of VMs and services you plan to deploy. If your current allocation does not cover them, request additional IPs.

Submit IP request

Go to Networking > IP Addresses on the Latitude.sh dashboard to request additional IPs:

- Select the required number of IPs. Request at least one /29 block shared by the cluster and the VMs, or two /29 blocks to separate cluster and VM traffic.

- Provide a justification for the request (e.g., setting up a Harvester-based private cloud).

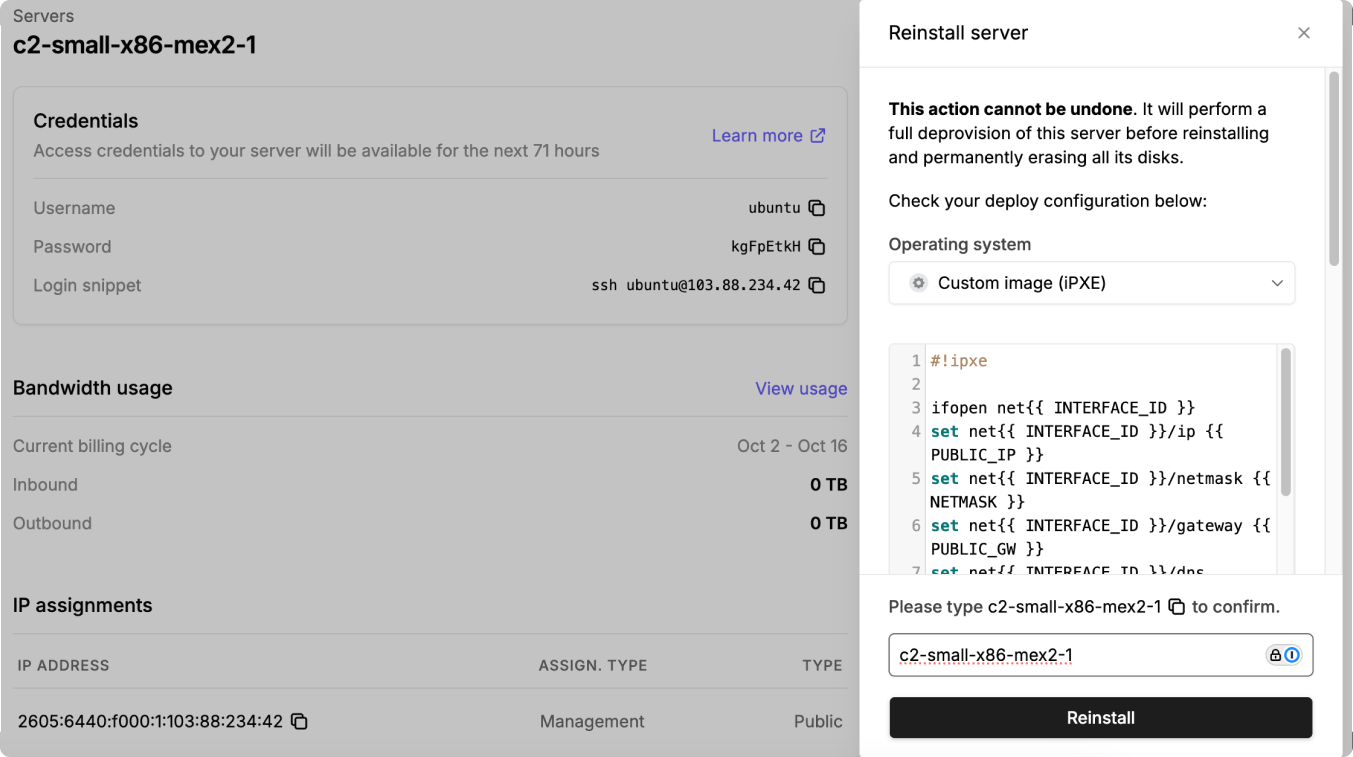
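
To estimate how many /29 blocks to request, the arithmetic can be sketched in Python as follows. A /29 contains 8 addresses. The sketch assumes 3 of them (network, broadcast, and one gateway address) are unavailable for nodes and VMs; that reserved count is an assumption, so adjust it to match how your block is actually provisioned. The function names and the sample block are illustrative.

```python
import ipaddress
import math


def usable_addresses(block: str, reserved: int = 3) -> int:
    """Addresses left for nodes, the VIP, and VMs after subtracting reserved ones.

    reserved=3 is an assumption: network, broadcast, and one gateway address.
    """
    network = ipaddress.ip_network(block, strict=False)
    return max(network.num_addresses - reserved, 0)


def blocks_needed(addresses_required: int,
                  sample_block: str = "203.0.113.8/29",
                  reserved: int = 3) -> int:
    """Number of equally sized blocks needed to cover the required addresses."""
    per_block = usable_addresses(sample_block, reserved)
    if per_block == 0:
        raise ValueError("block has no usable addresses with this reserved count")
    return math.ceil(addresses_required / per_block)


if __name__ == "__main__":
    # Example plan: 1 node IP + 1 cluster VIP + 6 VM addresses = 8 addresses.
    required = 1 + 1 + 6
    print(usable_addresses("203.0.113.8/29"))
    print(blocks_needed(required))
```

This is only a rough count. The cluster VIP has to sit in the same subnet as the node's public IP, so addresses from a second block cannot be used for it.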

Step 3: Boot the server

In the Latitude.sh dashboard, click Reinstall from the server Actions menu, choose Custom image (iPXE) as the OS, and paste your modified boot.ipxe script, which includes the URL of your customized Harvester configuration file. This automatically installs Harvester on the server and configures it as the management node for your cluster.